TFS TF400917 – Upgrading TFS to move to Azure DevOps

Today a customer at work hit an issue while upgrading from TFS 2017 to TFS 2019 with a view to moving to Azure DevOps, so I thought I would blog the issue and the fix in case anyone else runs into the same thing. The error we saw is shown below:

The error message was TF400917: The current configuration is not valid for this feature. Googling it takes you to this link: https://docs.microsoft.com/en-us/azure/devops/reference/xml/process-configuration-xml-element?view=azure-devops-2020

From here we can download and run a tool called witadmin; you can read more about it here: https://docs.microsoft.com/en-us/azure/devops/reference/witadmin/witadmin-customize-and-manage-objects-for-tracking-work?view=azure-devops-2020

If you download this tool, you can export the config like so:

witadmin exportprocessconfig /collection:CollectionURL /p:ProjectName [/f:FileName]

This exports the process config so we can check it for invalid items. In our case there were duplicate State values within the XML, so I exported the XML, removed the duplicate item by hand, and then imported the edited file using the following command:

witadmin importprocessconfig /collection:CollectionURL /p:ProjectName [/f:FileName] /v

For both of the commands above you have to supply the CollectionURL, ProjectName and a FileName. Importing the corrected config fixed the issue. The devil here is in the detail: you have to find the invalid entries. In our case it was a duplicate State of Completed. I removed one and saved, but nope, not that one, so I added it back in, removed the other and re-imported the config. Problem solved.
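Hunting for a duplicate State by eye gets tedious in a large export, so here is a minimal Python sketch that lists State values appearing more than once inside the same States block. It assumes the exported file groups `State` elements (each with a `value` attribute) under a `States` element, as in the process configuration reference linked above. The sample XML and the function name `find_duplicate_states` are made up for illustration and are not taken from our customer's config.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def find_duplicate_states(root):
    """Return (parent tag, category, state value) for each State value
    that appears more than once inside a single <States> block."""
    duplicates = []
    # Visit every element so we can report which parent owns each <States> block.
    for parent in root.iter():
        for states in parent.findall("States"):
            values = [s.get("value") for s in states.findall("State")]
            counts = Counter(v for v in values if v is not None)
            for value, count in counts.items():
                if count > 1:
                    duplicates.append((parent.tag, parent.get("category"), value))
    return duplicates


# Hypothetical sample, shaped like an exported process config (assumption).
SAMPLE = """
<ProjectProcessConfiguration>
  <TaskBacklog category="Microsoft.TaskCategory">
    <States>
      <State value="To Do" type="Proposed" />
      <State value="In Progress" type="InProgress" />
      <State value="Completed" type="Complete" />
      <State value="Completed" type="Complete" />
    </States>
  </TaskBacklog>
</ProjectProcessConfiguration>
"""

if __name__ == "__main__":
    for tag, category, value in find_duplicate_states(ET.fromstring(SAMPLE)):
        print(f"Duplicate State '{value}' under <{tag}> (category: {category})")
```

For a real export, load the file with `ET.parse("FileName").getroot()` and pass the result to the function. The script only points at where the duplicates are; as we found out, you still have to work out which of the two entries is the one to remove.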

Note

You can also download an add-on for Visual Studio called the TFS Process Template Editor, which can help with the task of migrating from TFS to Azure DevOps. The link to download it is https://marketplace.visualstudio.com/items?itemName=KarthikBalasubramanianMSFT.TFSProcessTemplateEditor

With the above tool, you can visualize the config for your TFS setup, which helps you see what’s going on under the hood a little better. A useful tool!

Kudos to https://twitter.com/samsmithnz for telling me about this – Sam rocks!

Recently Microsoft open sourced the

Recently Microsoft open sourced the  The general tab is for creating projects in Azure DevOps from existing project templates, this will give you full source code, build and release pipelines, wikis, example kanban boards with issues etc and more

The general tab is for creating projects in Azure DevOps from existing project templates, this will give you full source code, build and release pipelines, wikis, example kanban boards with issues etc and more

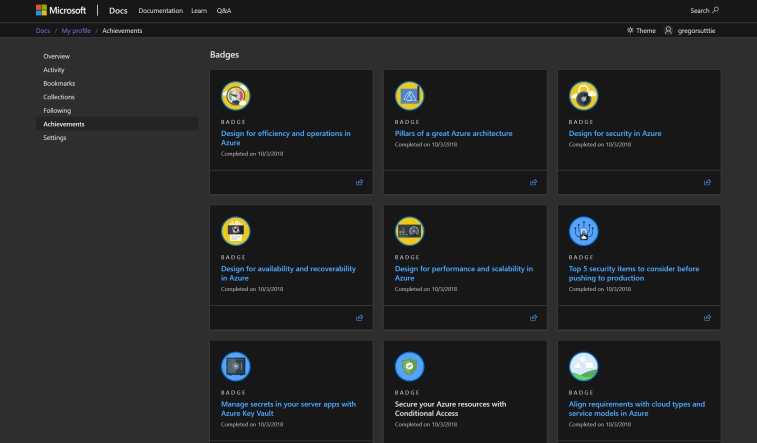

Using Microsoft Learn we can learn how to do things like: –

Using Microsoft Learn we can learn how to do things like: –